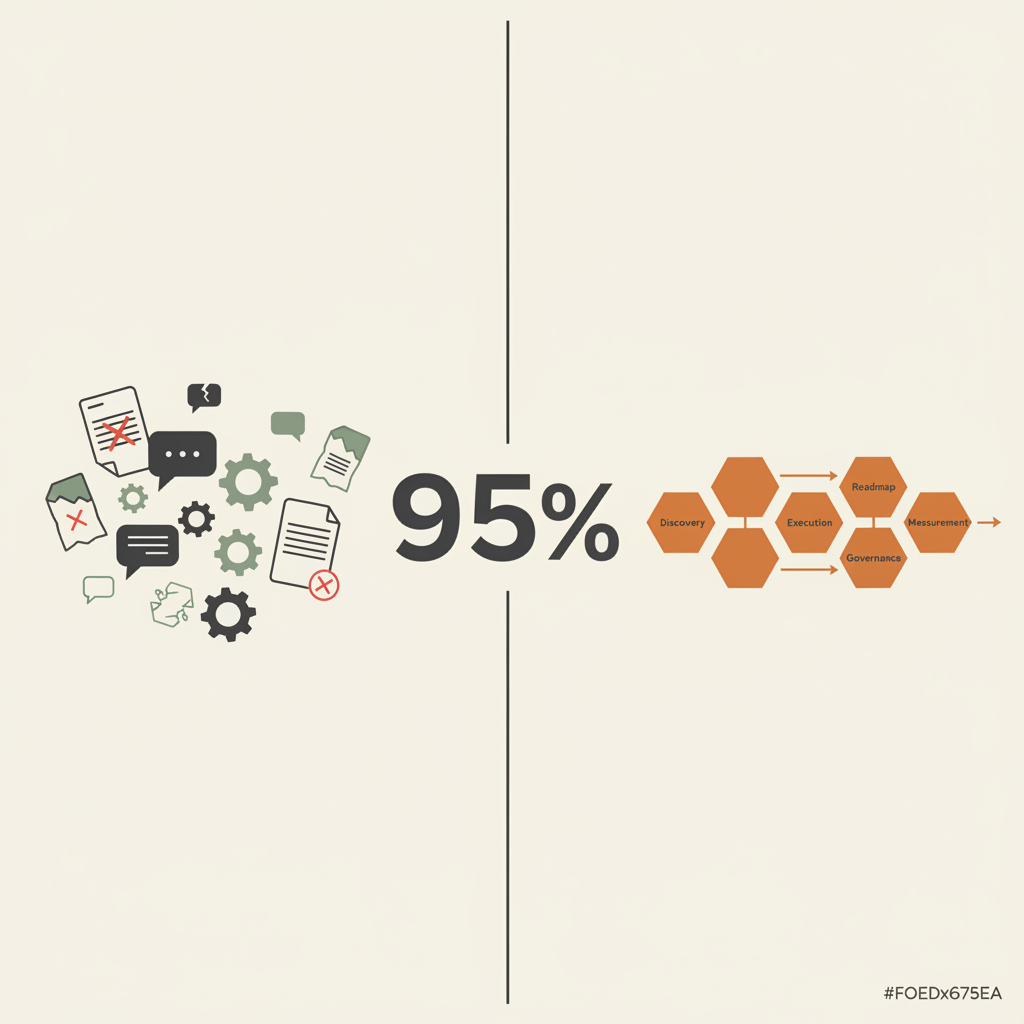

Why 95% of AI Projects Fail (And It's Not the Technology)

After working with dozens of teams on AI adoption, the pattern is clear: the technology works fine. The failure isn't technical. It's organisational discipline.

Tim Clark

Co-founder · 27 February 2026 · 5 min read

TL;DR

AI project failure isn't a technology problem. It's a discipline problem. Without systematic discovery, measurement, governance, and training, even brilliant AI tools fail. The organisations that succeed treat AI adoption like any other critical business capability: structured, measured, and sustainable.

You could hand the world’s best AI tools to your organisation and you’d still fail 95% of the time.

I know that’s a bold claim. But after working with dozens of teams on AI adoption, I’ve watched the pattern repeat: the technology works fine. The failure isn’t technical.

Rand Group research puts the figure at 87% of AI projects never making it to production. Gartner estimates that through 2025, at least 30% of generative AI projects will be abandoned after proof of concept. Whatever the exact number, the root cause is the same: it’s almost never the technology.

The Real Problem

Here’s what actually happens:

A leader reads about ChatGPT or Claude and thinks, “We need this.” A team gets excited. Someone sets up an account. They run a few experiments. And then… silence. The project lives in one person’s head. There’s no measurement of what worked. No training for the wider team. No governance framework. No documentation of what was built. When that person leaves or moves to something else, the whole thing collapses.

Or they implement something bigger: a workflow automation or document processing system. It works brilliantly for three weeks. Then nobody maintains it. The prompts drift. The outputs degrade. No one notices because there’s no monitoring. Three months later, someone asks, “Whatever happened to that AI project?” and nobody remembers.

The pattern is consistent: technology success + organisational chaos = project failure.

Why This Happens

It’s not stupidity. It’s that AI is new enough that most organisations haven’t figured out the discipline required to make it sustainable.

When you implement a new CRM system, you have processes. A CRM vendor gives you implementation guides. Your team gets trained. There’s a change management plan. There’s governance about who can access what. There are quarterly reviews of adoption metrics.

With AI, we’re still in the Wild West. People experiment in isolation. They build something, it works, and then they expect it to just… keep working. Without maintenance, measurement, or documentation.

MIT Sloan Management Review found that 91% of top data managers cited team challenges and change management, not technology limitations, as the main barriers holding them back with AI. McKinsey’s research reinforces this: companies with a formal AI strategy report an 80% success rate in AI adoption, compared to just 37% for those without one.

The constraint isn’t whether ChatGPT can analyse documents. It’s:

- Does your team have a framework for finding AI opportunities systematically?

- Once you build something, who owns maintaining it?

- How do you measure whether it actually delivered value?

- How do you scale from one team experimenting to the entire organisation moving systematically?

- What happens when someone leaves and nobody knows how the system works?

These aren’t technology questions. They’re discipline questions.

What Actually Works

The organisations that successfully adopt AI do something different:

They treat it like any other critical business capability: discovery, planning, execution, measurement, governance, training.

They don’t ask, “Can we use AI?” They ask, “Where will systematic AI use save the most time, reduce the most risk, or improve outcomes the most?” They map opportunities. They prioritise. They run pilots with proper measurement. They scale only what works.
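One way to make the prioritisation step concrete is a simple impact-versus-effort score. The sketch below is a minimal illustration, not a prescribed method: the `Opportunity` class, the 1 to 5 scales, and the example backlog are all hypothetical, and a real scoring model would likely need more factors than these two.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    name: str
    impact: int  # assumed scale: 1 (low) to 5 (high), a team estimate
    effort: int  # assumed scale: 1 (low) to 5 (high), a team estimate


def prioritise(opportunities):
    """Return opportunities sorted by impact-to-effort ratio, highest first."""
    return sorted(opportunities, key=lambda o: o.impact / o.effort, reverse=True)


# Hypothetical backlog, for illustration only
backlog = [
    Opportunity("Automate contract review", impact=5, effort=5),
    Opportunity("Summarise support tickets", impact=4, effort=2),
    Opportunity("Draft meeting notes", impact=3, effort=2),
]

ranked = prioritise(backlog)
for opportunity in ranked:
    print(f"{opportunity.name}: {opportunity.impact / opportunity.effort:.2f}")
```

The point is less the formula than the habit: every candidate gets scored the same way, so the choice of pilot can be explained and revisited later.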

They build documentation as they go. They train their teams. They establish governance that enables fast, safe decision-making rather than blocking it.

They measure ROI clearly. Not to prove AI works, but to understand where it’s creating value and where it’s not.
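As a rough illustration of what “measuring ROI clearly” can look like for a single pilot, here is a minimal sketch. The function name, its inputs, and the figures are assumptions made for the example; it values the benefit purely as time saved, which will understate or overstate the return in many real cases.

```python
def pilot_roi(hours_saved_per_month, hourly_cost, monthly_running_cost):
    """Return monthly ROI as a fraction: (benefit - cost) / cost."""
    if monthly_running_cost <= 0:
        raise ValueError("monthly_running_cost must be positive")
    benefit = hours_saved_per_month * hourly_cost
    return (benefit - monthly_running_cost) / monthly_running_cost


# Hypothetical figures: 40 hours saved at 50 per hour, 800 per month to run
roi = pilot_roi(hours_saved_per_month=40, hourly_cost=50.0, monthly_running_cost=800.0)
print(f"Monthly ROI: {roi:.0%}")
```

Tracking the same calculation month after month is what shows whether a pilot is still creating value or quietly degrading.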

They treat the first person who builds something as a proof point, not the final solution. They systematically spread that knowledge across the team so it survives when people move on.

This takes discipline. But it’s the difference between a single successful AI project and systematic AI adoption that compounds over time. It’s also why we built the AI Native Programme as a structured monthly partnership rather than a one-off engagement. Sustainable AI adoption requires ongoing structure.

How the Platform Helps

The AI Native Platform is built around this exact insight. It’s not trying to replace ChatGPT or Claude (those are tools you’ll use within the platform). It’s infrastructure for the discipline part.

- Discovery Canvas: systematically find where AI matters most in your business, rather than guessing

- Roadmap & Prioritisation: rank opportunities by impact and effort so you invest in the right projects first

- Task Management: keep AI project execution visible across your team

- ROI Measurement: track actual returns, not assumptions, so you know what’s working

- Governance Framework: move fast without fear of chaos, with built-in risk assessments and policy guardrails

- Training Modules: build team capability so knowledge doesn’t walk out the door

Essentially, the platform exists because we realised that the bottleneck for most organisations isn’t the AI tools. It’s the discipline, measurement, and structure around them.

If you want to see what this looks like in practice, the AI Readiness Assessment is a good place to start. It helps you understand how AI-literate your team is, what tools they’ve already used, and how they feel about using AI in your organisation.

What This Means for Your Organisation

If you’re experimenting with AI today, you’ve probably got ChatGPT, Claude, maybe Copilot. Different teams using different tools. No shared framework for what’s working. No measurement of impact. No governance. You’re probably part of the 95%.

The path forward isn’t more tools. It’s systematic adoption. Discovery, measurement, training, governance, scaling.

That’s not sexy. But it’s what actually works.

Interested in understanding where AI could actually matter in your organisation? Get in touch. I’m happy to chat through your biggest opportunities and where the real bottlenecks are.