Governance as Confidence (Not Constraint)

Good AI governance doesn't slow you down. It accelerates you. Risk-based frameworks let teams move fast where it's safe and apply controls where it matters.

Tim Clark

Co-founder · 27 February 2026 · 5 min read

TL;DR

Bad governance creates bureaucracy and drives AI use underground. Good governance is risk-based: minimal controls for low-risk work, strict oversight for high-risk decisions. The result isn't slower teams. It's confident teams with audit trails.

When I mention “governance,” most people immediately think: bureaucracy. Slow. Red tape. “We can’t do this without filling out Form 47 and waiting for committee approval.”

That’s governance done wrong.

Good governance doesn’t slow you down. It accelerates you.

Let me explain.

The Governance Problem (Done Wrong)

Bad governance looks like: “No one can use AI without executive sign-off. All AI decisions go through a committee. All prompts must be reviewed. All outputs must be audited.”

This stops experimentation. It kills momentum. It makes your organisation move like a tank through mud.

So teams bypass it. They use ChatGPT in ways you don’t know about. They build AI systems outside your frameworks. They hide things because the official process is too slow.

That’s not safety. That’s the opposite. That’s fragmentation and risk. Deloitte’s research highlights exactly this problem: unsanctioned AI deployments by individual teams create governance blind spots that are far more dangerous than the risks governance was meant to prevent.

And the numbers back this up: while 75% of organisations have established AI usage policies, only 36% have adopted a formal governance framework, according to the 2025 AI Governance Benchmark Report. Having a policy without a framework means no consistent roles, controls, monitoring, or enforcement. It’s governance theatre.

The Governance Insight

Here’s what I’ve noticed in organisations that are winning:

They don’t have less governance. They have smarter governance.

They ask: What’s actually risky? And what’s not?

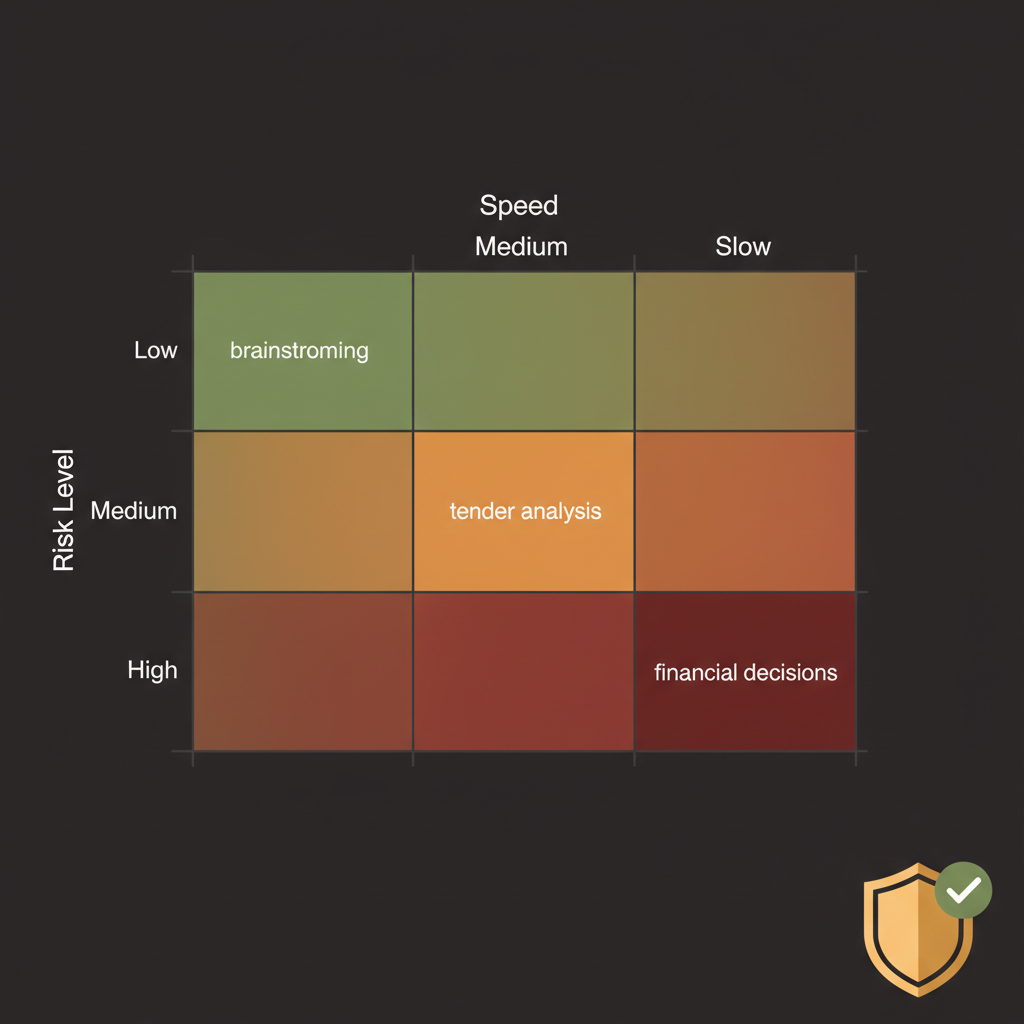

Using ChatGPT to brainstorm marketing copy? Low risk. Let the team do it. Minimal controls needed.

Using an AI model to flag fraudulent transactions? High risk. That needs governance. That needs an audit trail, oversight, validation.

Storing customer data in a third-party AI system? High risk. Governance required.

Processing internal meeting notes? Low risk.

The organisations that move fast haven’t abandoned governance. They have risk-based governance. They apply strict controls where it matters and trust teams where it doesn’t.

What Risk-Based Governance Looks Like

Instead of one process for everything, you have levels:

Green (Low Risk): Team uses AI tools with minimal controls. A completion timestamp is logged. That’s it.

Yellow (Medium Risk): Team uses AI, but the prompt is documented, a second person reviews the output, and there’s an audit trail. Takes an extra 30 minutes per project.

Red (High Risk): Governance committee review. Full audit trail. Compliance checklist. Formal sign-off.

What determines the level? Risk factors:

- Is personal data involved?

- Is this a legal or regulatory decision?

- Could bad output cause real harm?

- Are we trusting AI to make a decision, or just to inform one?

- How transparent is the AI’s reasoning?

Most projects are green or yellow. A few are red.

And here’s the key: Teams know upfront which level they’re in. They’re not surprised halfway through. They know what controls they need and plan accordingly.
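The levels and risk factors above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: the names `RiskProfile`, `CONTROLS`, and `classify` are hypothetical, and the rule that maps factors to levels (no factors raised is green, one or two is yellow, three or more is red) is an assumption made for the example. Your own thresholds should reflect your risk profile.

```python
from dataclasses import dataclass, fields
from enum import Enum


class Level(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


@dataclass(frozen=True)
class RiskProfile:
    """One boolean per risk factor; True means the factor raises risk."""
    personal_data: bool = False        # Is personal data involved?
    legal_or_regulatory: bool = False  # Is this a legal or regulatory decision?
    could_cause_harm: bool = False     # Could bad output cause real harm?
    ai_makes_decision: bool = False    # True if AI decides, False if it only informs
    opaque_reasoning: bool = False     # True if the AI's reasoning is hard to inspect


# Controls per level, paraphrased from the level descriptions above.
CONTROLS = {
    Level.GREEN: ["completion timestamp"],
    Level.YELLOW: ["prompt documentation", "second-person review", "audit trail"],
    Level.RED: [
        "governance committee review",
        "full audit trail",
        "compliance checklist",
        "formal sign-off",
    ],
}


def classify(profile: RiskProfile) -> Level:
    """Map a risk profile to a level using an assumed, illustrative threshold."""
    raised = sum(getattr(profile, f.name) for f in fields(profile))
    if raised == 0:
        return Level.GREEN
    if raised <= 2:
        return Level.YELLOW
    return Level.RED


# Hypothetical projects: brainstorming raises no factors; a tender analysis
# where a human makes the final call raises only the "could cause harm" factor.
brainstorm = RiskProfile()
tender_analysis = RiskProfile(could_cause_harm=True)

for name, profile in [("brainstorm", brainstorm), ("tender analysis", tender_analysis)]:
    level = classify(profile)
    print(name, level.value, CONTROLS[level])
```

Because the classification runs before work starts, the team sees its level and its list of controls on day one, which is the point of knowing upfront.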

This is why the AI Native Platform includes a built-in governance framework — so your team can classify projects by risk level and apply the right controls without slowing down the low-risk work. Gartner’s research found that organisations with AI governance platforms are 3.4 times more likely to achieve high effectiveness in AI governance than those without.

How This Enables Speed

Let me give you an example.

Tender analysis: Is it high risk?

Some organisations say yes: “We’re using AI to evaluate proposals. That’s a decision that could be wrong. Red-level governance required.”

Other organisations think differently: “The AI reads the tender, extracts information, and makes a recommendation. A human reads the AI output and makes the decision. The human is responsible. The AI is a tool.”

Different risk assessment. Yellow governance, not red.

With yellow governance, you can:

- Launch projects in 4 weeks, not 12 weeks

- Scale across teams, not wait for approval

- Iterate and improve, not freeze specs

- Trust teams, not create bottlenecks

And you’re still safe. You still have documentation. You still have an audit trail. You still have review. You just don’t have bureaucracy.

The data supports this approach. The Cloud Security Alliance found that organisations with comprehensive AI governance are nearly twice as likely to successfully adopt advanced AI, with 46% adoption versus just 25% for those with only partial guidelines. Good governance doesn’t slow adoption. It enables it.

The Confidence Part

Here’s why this matters for your board and executives:

Bad governance makes it look like you’re being careful while you’re actually creating risk.

Good governance means your leadership can move fast with confidence.

“We evaluated tenders with AI support. The AI recommendation was reviewed by our procurement lead. Here’s the audit trail of the AI analysis. Here’s the decision. Here’s the reasoning.”

That’s auditable. That’s defensible. That’s confidence.

Compare that to: “We used ChatGPT to help. I think it worked well. Here’s the outcome.”

That second one is risky. Not because you used AI. Because you can’t explain your process.
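The difference between those two statements comes down to what was written down at the time. As a rough sketch, an audit trail entry for the tender example could be one structured record per decision. The field names, the `AuditRecord` class, and the JSON-lines format below are all assumptions made for illustration, not a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical audit trail entry for one AI-supported decision."""
    project: str
    level: str              # governance level, e.g. "yellow"
    prompt: str             # what the AI was asked
    ai_recommendation: str  # what the AI returned
    reviewer: str           # the human who reviewed the output
    decision: str           # what the human decided
    reasoning: str          # why the human decided it
    recorded_at: str        # UTC timestamp in ISO 8601 format


def make_record(project, level, prompt, ai_recommendation,
                reviewer, decision, reasoning) -> AuditRecord:
    """Build a record and stamp it with the current UTC time."""
    return AuditRecord(
        project=project,
        level=level,
        prompt=prompt,
        ai_recommendation=ai_recommendation,
        reviewer=reviewer,
        decision=decision,
        reasoning=reasoning,
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def to_json_line(record: AuditRecord) -> str:
    """Serialise a record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


# Illustrative values only; none of these come from a real tender.
record = make_record(
    project="tender analysis",
    level="yellow",
    prompt="Summarise the tender and recommend a shortlist.",
    ai_recommendation="Shortlist suppliers A and C.",
    reviewer="procurement lead",
    decision="Shortlist supplier A only.",
    reasoning="Supplier C did not meet the delivery timeline.",
)
print(to_json_line(record))
```

Each record answers the questions a board or auditor will ask: what the AI was asked, what it said, who reviewed it, what was decided, and why.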

This is where structure matters, and it’s one of the reasons the AI Native Programme includes governance setup as part of the monthly partnership. You don’t need to build a framework from scratch. You need one that fits your risk profile and enables your team to move.

What This Means for Your Organisation

If you’re worried about AI risk, good governance is the answer. Not “no AI.” Not “secret AI.” Structured, risk-based governance.

It lets you move confidently. Your team knows what’s expected. Your executives know what’s auditable. Your customers (if relevant) know you’re being responsible.

It’s not bureaucracy. It’s confidence.

And it’s not “no governance vs. full governance.” It’s “smart governance that enables speed where it’s safe and controls where it matters.”

That’s how you actually win with AI.

Keen to talk about what a governance framework might look like for your organisation? Get in touch. It’s usually a shorter conversation than you’d expect.